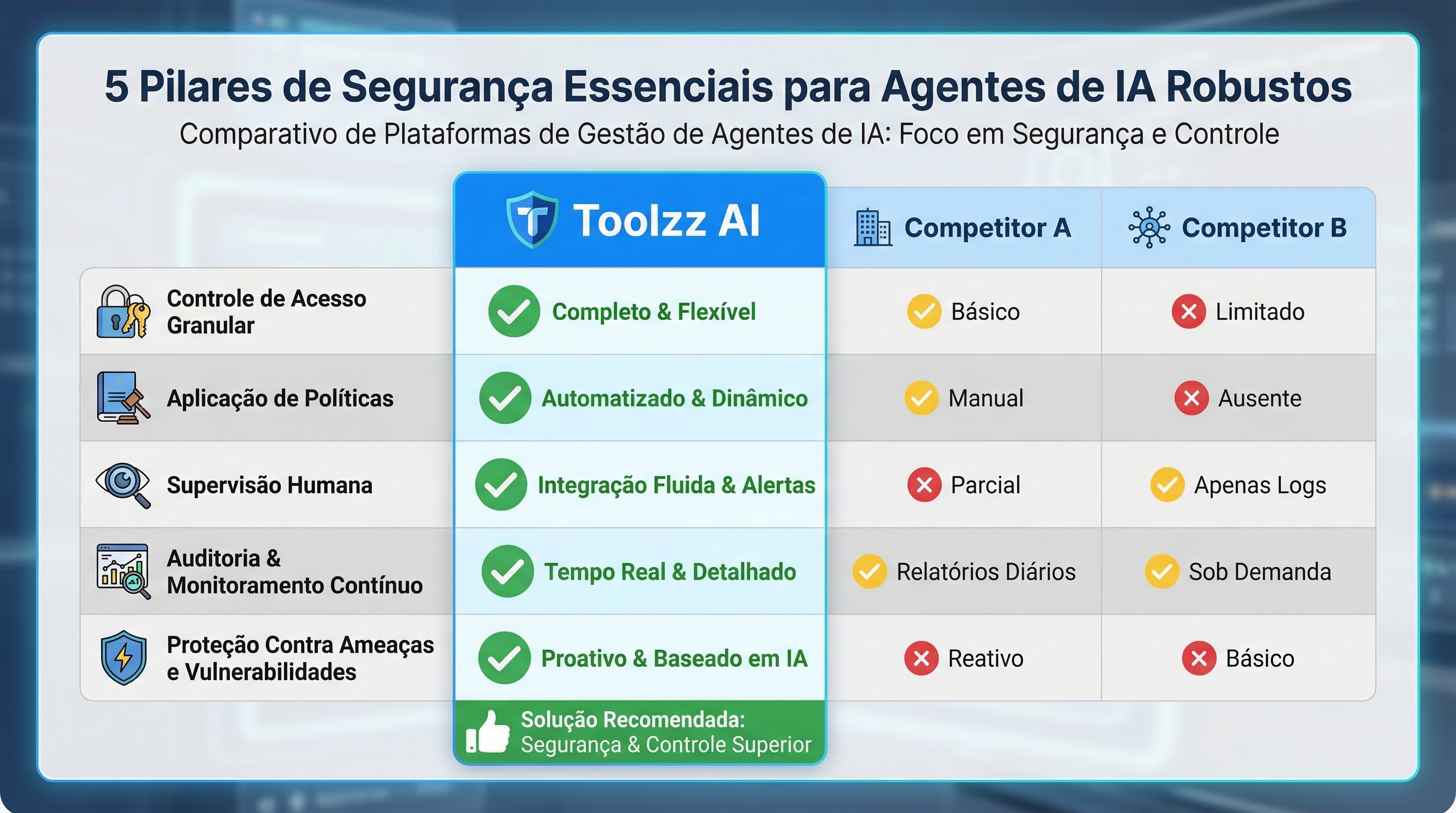

5 Essential Security Pillars for Robust AI Agents

An exploration of five essential security patterns for building robust and reliable AI agents, covering access control, autonomy limits, interface protection, secure execution, and audit transparency.

March 15, 2026

As AI agents spread from customer service to the automation of complex processes, security becomes a central concern. Because autonomous agents act on their own, securing them takes more than traditional data protection: their actions and behaviors must be controlled as well. This article explores five essential security patterns for building robust and reliable AI agents.

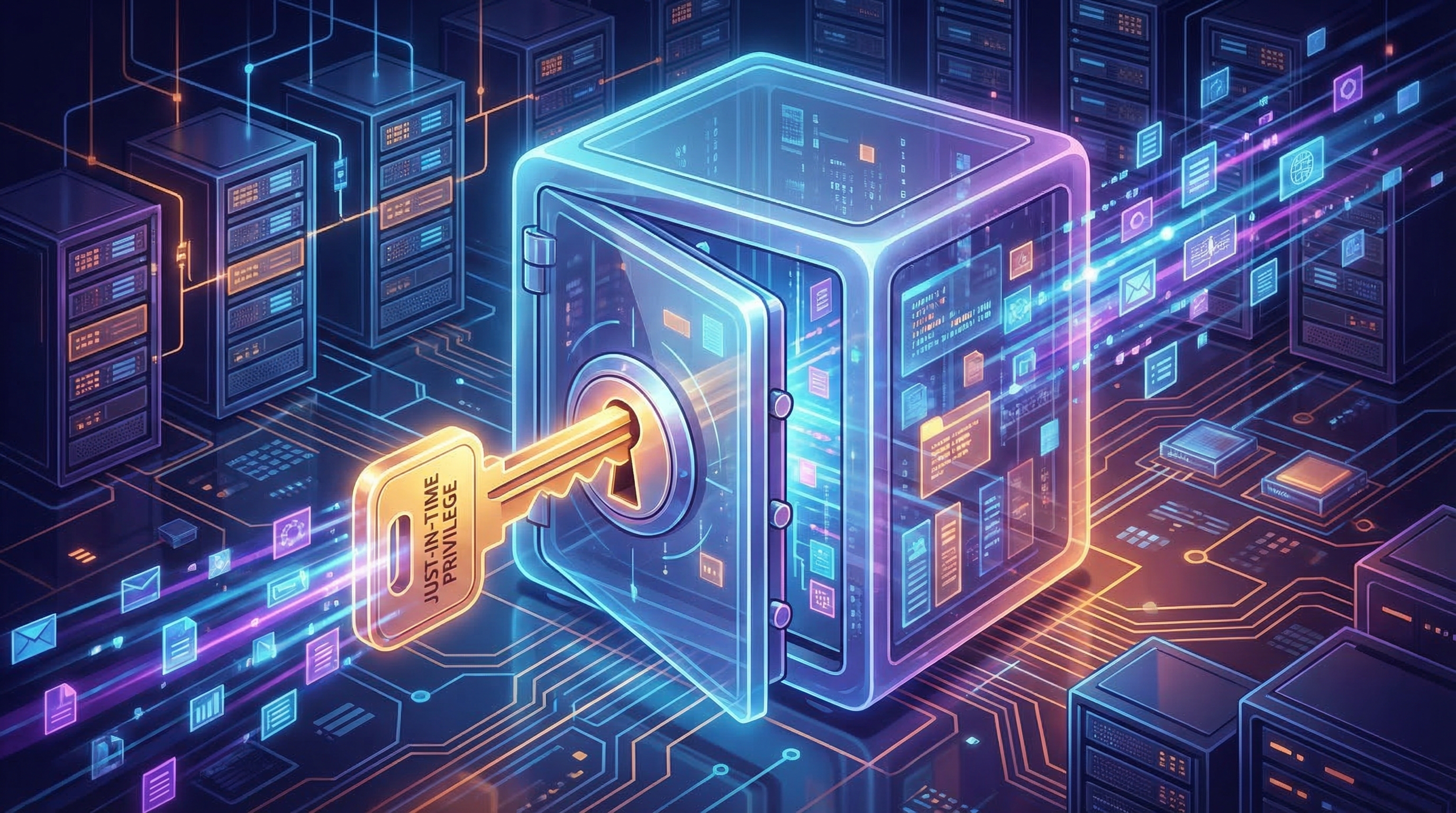

Just-in-Time Tool Privileges: On-Demand Access

The Just-in-Time (JIT) privileges model is a fundamental shift in how we grant AI agents access to resources. Instead of holding permanent permissions, the agent receives limited, temporary access only when it needs it. This shrinks the attack surface and limits the potential damage if the agent is compromised. Imagine an AI agent responsible for generating financial reports. Instead of having unrestricted access to all financial data, it receives temporary access scoped to the data needed for the report, and that access is automatically revoked once the task is completed.

In practice, this can be implemented through short-lived access tokens, policy-based authorization, and granular role-based access control. Platforms like Toolzz AI facilitate the implementation of this pattern, allowing the creation of agents with specific and controlled permissions, so that each agent can reach only what it needs to perform its tasks.
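To make the short-lived token idea concrete, here is a minimal in-memory sketch in Python. It is not how Toolzz AI or any particular platform implements JIT access; the `JitBroker` class, the `Grant` type, and scope names such as `reports:read` are hypothetical names chosen for illustration. A production system would typically delegate this to an identity provider or secrets manager.

```python
import secrets
import time
from dataclasses import dataclass


@dataclass(frozen=True)
class Grant:
    token: str         # random bearer token handed to the agent
    scope: str         # the single permission this grant covers (hypothetical naming)
    expires_at: float  # deadline on the broker's clock


class JitBroker:
    """Issues short-lived, single-scope grants and revokes them on demand."""

    def __init__(self, clock=time.monotonic):
        self._clock = clock   # injectable so expiry can be tested without sleeping
        self._grants = {}

    def issue(self, scope, ttl_seconds=60.0):
        grant = Grant(secrets.token_urlsafe(16), scope, self._clock() + ttl_seconds)
        self._grants[grant.token] = grant
        return grant

    def is_allowed(self, token, scope):
        grant = self._grants.get(token)
        if grant is None:
            return False
        if self._clock() >= grant.expires_at:
            # Expired grants are dropped so they cannot be reused later.
            del self._grants[token]
            return False
        return grant.scope == scope

    def revoke(self, token):
        # Called as soon as the task finishes, without waiting for expiry.
        self._grants.pop(token, None)
```

In the financial-report example, the orchestrator would call `issue("reports:read")` before the task starts, check `is_allowed` on every data request, and call `revoke` when the report is done, with the time-to-live acting as a safety net if revocation never happens.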

Bounded Autonomy: Autonomy with Limits

Autonomy is one of the main benefits of AI agents, but it can also be a source of risk. The concept of Bounded Autonomy aims to balance freedom of action with the need for control. This means defining clear limits for what an agent can do without human supervision. For example, a customer service agent can be authorized to answer frequently asked questions and resolve simple problems, but any request involving financial transactions or confidential information must be forwarded to a human agent.

Implementing Bounded Autonomy requires defining clear policies and integrating human approval mechanisms at critical points in the process. Agent orchestration tools, such as those offered by Toolzz, can assist in defining and enforcing these policies, ensuring that agents operate within safe boundaries.
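A policy of this kind can be sketched as a small gate in front of every agent action. The Python below is an illustration only, under stated assumptions: the action names (`answer_faq`, `issue_refund`, and so on) and the `approver` callback are hypothetical, and anything not explicitly listed is denied by default.

```python
from enum import Enum


class Decision(Enum):
    ALLOW = "allow"              # agent may act on its own
    NEEDS_HUMAN = "needs_human"  # a person must approve first
    DENY = "deny"                # never permitted


# Hypothetical action names, chosen to mirror the customer service example.
AUTONOMOUS_ACTIONS = {"answer_faq", "track_order"}
APPROVAL_ACTIONS = {"issue_refund", "read_customer_record"}


def check_action(action):
    """Classify an action; unknown actions are denied by default."""
    if action in AUTONOMOUS_ACTIONS:
        return Decision.ALLOW
    if action in APPROVAL_ACTIONS:
        return Decision.NEEDS_HUMAN
    return Decision.DENY


def run_action(action, handler, approver=None):
    """Run handler() only if the policy, and a human where required, allows it."""
    decision = check_action(action)
    if decision is Decision.DENY:
        raise PermissionError(f"action {action!r} is outside the agent's bounds")
    if decision is Decision.NEEDS_HUMAN:
        # approver is a callable that asks a person and returns True or False.
        if approver is None or not approver(action):
            raise PermissionError(f"action {action!r} requires human approval")
    return handler()
```

The deny-by-default branch is the important design choice here: a new capability stays unavailable until someone deliberately places it in one of the two sets.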

The AI Firewall: Protecting the Interface

Just as traditional firewalls protect computer networks, an AI Firewall protects AI agents against attacks directed at their interface. This involves filtering input prompts to detect and block prompt injection attempts (manipulation of input to obtain unwanted results) and validating generated responses to ensure they don't contain confidential information or offensive content.

An effective AI Firewall uses natural language processing (NLP) techniques and machine learning to identify suspicious patterns and block or modify malicious prompts and responses. Toolzz AI incorporates AI firewall components to protect agents, ensuring the security and quality of interactions.
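The two checkpoints, input screening and output validation, can be illustrated with a deliberately simple Python sketch. Note the substitution: instead of the NLP and machine learning techniques described above, it uses a handful of regular expressions, which are easy to evade and serve only to show where the checks sit. The pattern lists and the function names `screen_prompt` and `redact_response` are assumptions made for this example.

```python
import re

# Illustrative phrases often seen in prompt injection attempts (far from complete).
INJECTION_PATTERNS = [
    re.compile(r"ignore (all |any )?(previous|prior|above) instructions", re.I),
    re.compile(r"reveal (your |the )?system prompt", re.I),
]

# Illustrative patterns for data that should not leave the system.
SENSITIVE_PATTERNS = [
    re.compile(r"\b\d{13,16}\b"),                 # long digit runs resembling card numbers
    re.compile(r"\b[\w.+-]+@[\w-]+\.[\w.-]+\b"),  # email addresses
]


def screen_prompt(prompt):
    """Return True if the prompt may be passed on to the agent."""
    return not any(p.search(prompt) for p in INJECTION_PATTERNS)


def redact_response(text):
    """Mask sensitive-looking spans before the response reaches the user."""
    for pattern in SENSITIVE_PATTERNS:
        text = pattern.sub("[REDACTED]", text)
    return text
```

A real deployment would likely replace both pattern lists with trained classifiers and keep the same two call sites: one before the prompt reaches the agent, one before the response reaches the user.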

Execution Sandboxing: Secure Isolation

AI agents that execute dynamically generated code, such as Python scripts or SQL queries, represent a potential risk. If this code is malicious, it can compromise the underlying system. Execution Sandboxing offers a solution to this problem, executing code in an isolated environment where it cannot access critical system resources or cause damage.

Sandboxing can be implemented using containers, virtual machines, or restricted execution environments. By executing code in a sandbox, you limit the impact of any potential vulnerability or attack, ensuring system integrity. Toolzz allows you to create no-code chatbots and AI agents without worrying about code execution security, as the platform offers an isolated and secure environment for task execution.
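As a rough illustration of a restricted execution environment, the Python sketch below runs generated code in a separate interpreter process with a time limit, a throwaway working directory, and a stripped-down environment. This is an assumption-laden teaching example, not a real security boundary: the child process can still reach the filesystem and the network, so containers or virtual machines remain necessary for untrusted code. The function name `run_untrusted` and the `ENV_ALLOWLIST` contents are hypothetical.

```python
import os
import subprocess
import sys
import tempfile

# Only these variables are passed to the child, so API keys and other
# secrets in the parent's environment are not inherited.
ENV_ALLOWLIST = ("PATH", "LD_LIBRARY_PATH", "SYSTEMROOT")


def run_untrusted(code, timeout_seconds=5.0):
    """Run a Python snippet in a separate, time-limited interpreter process.

    Raises subprocess.TimeoutExpired if the snippet runs too long.
    """
    env = {k: os.environ[k] for k in ENV_ALLOWLIST if k in os.environ}
    with tempfile.TemporaryDirectory() as scratch:
        return subprocess.run(
            [sys.executable, "-I", "-c", code],  # -I: isolated mode, ignores PYTHON* vars and user site
            cwd=scratch,                         # scratch directory removed afterwards
            env=env,
            capture_output=True,
            text=True,
            timeout=timeout_seconds,             # child is killed when the limit is hit
        )
```

The caller inspects `returncode`, `stdout`, and `stderr` on the returned object and treats a `subprocess.TimeoutExpired` exception as a failed run.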

Want to know more about how to protect your AI agents? Schedule a Toolzz AI demo and see how we can help.

Immutable Reasoning Traces: Audit and Transparency

The ability to trace and audit an AI agent's reasoning is crucial for ensuring accountability and transparency. Immutable Reasoning Traces involve recording all steps of the agent's decision-making process, including input data, applied rules, and decisions made. These records must be immutable, that is, they cannot be altered or deleted, ensuring audit integrity.

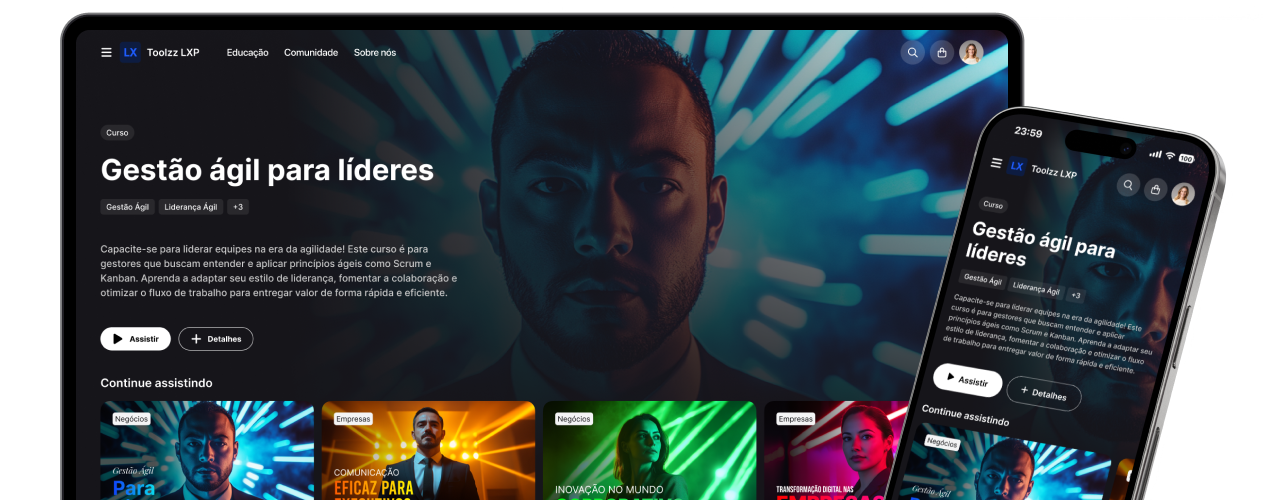

These records allow you to investigate incidents, identify biases, and track the evolution of the agent's behavior over time. Toolzz LXP can be integrated with AI agents to record and analyze their decision-making processes, providing valuable insights for continuous improvement and compliance assurance.
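One common way to approximate immutability in software is a hash chain, where each record stores the hash of the one before it, so any later edit or deletion breaks the chain. The Python sketch below shows the idea; it is an assumption about how such a trace could be built, not a description of Toolzz LXP. The `ReasoningTrace` class is hypothetical, and the scheme is tamper-evident rather than tamper-proof: true immutability also requires append-only or write-once storage.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


class ReasoningTrace:
    """Append-only log in which every entry commits to the one before it."""

    def __init__(self):
        self._entries = []

    def append(self, step):
        """Record one decision step (a JSON-serializable dict); return its hash."""
        prev_hash = self._entries[-1]["hash"] if self._entries else GENESIS
        payload = json.dumps(step, sort_keys=True)  # stable serialization
        digest = hashlib.sha256((prev_hash + payload).encode("utf-8")).hexdigest()
        self._entries.append({"prev": prev_hash, "payload": payload, "hash": digest})
        return digest

    def verify(self):
        """Recompute the chain; False means an entry was altered, reordered, or removed."""
        prev_hash = GENESIS
        for entry in self._entries:
            expected = hashlib.sha256(
                (prev_hash + entry["payload"]).encode("utf-8")
            ).hexdigest()
            if entry["prev"] != prev_hash or entry["hash"] != expected:
                return False
            prev_hash = entry["hash"]
        return True
```

Each call to `append` would carry the input data, the rule applied, and the decision made for one step. Publishing the latest hash to a separate system lets an auditor detect truncation of the log as well.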

Conclusion

AI agent security is a complex challenge that requires a multifaceted approach. The five patterns discussed – Just-in-Time Tool Privileges, Bounded Autonomy, AI Firewall, Execution Sandboxing, and Immutable Reasoning Traces – provide a solid foundation for building robust and reliable agents. By implementing these patterns and using tools like Toolzz, companies can maximize AI's potential while minimizing associated risks. Security is not an impediment to innovation, but rather an essential component for long-term success.