How to Create a Profanity Filter That Actually Works

A comprehensive guide on building an effective profanity filter using sanitization, Trie data structures, allow-lists, and AI/ML integration to moderate online content while avoiding common pitfalls like the Scunthorpe Problem.


March 15, 2026

Content moderation is a growing challenge for companies of all sizes. An effective profanity filter is crucial for maintaining a safe and respectful online environment, but building one that actually works is more complex than it seems. A simple list of banned words is easily circumvented, so identifying and blocking inappropriate content requires a more sophisticated approach.

The Limitation of Banned Word Lists

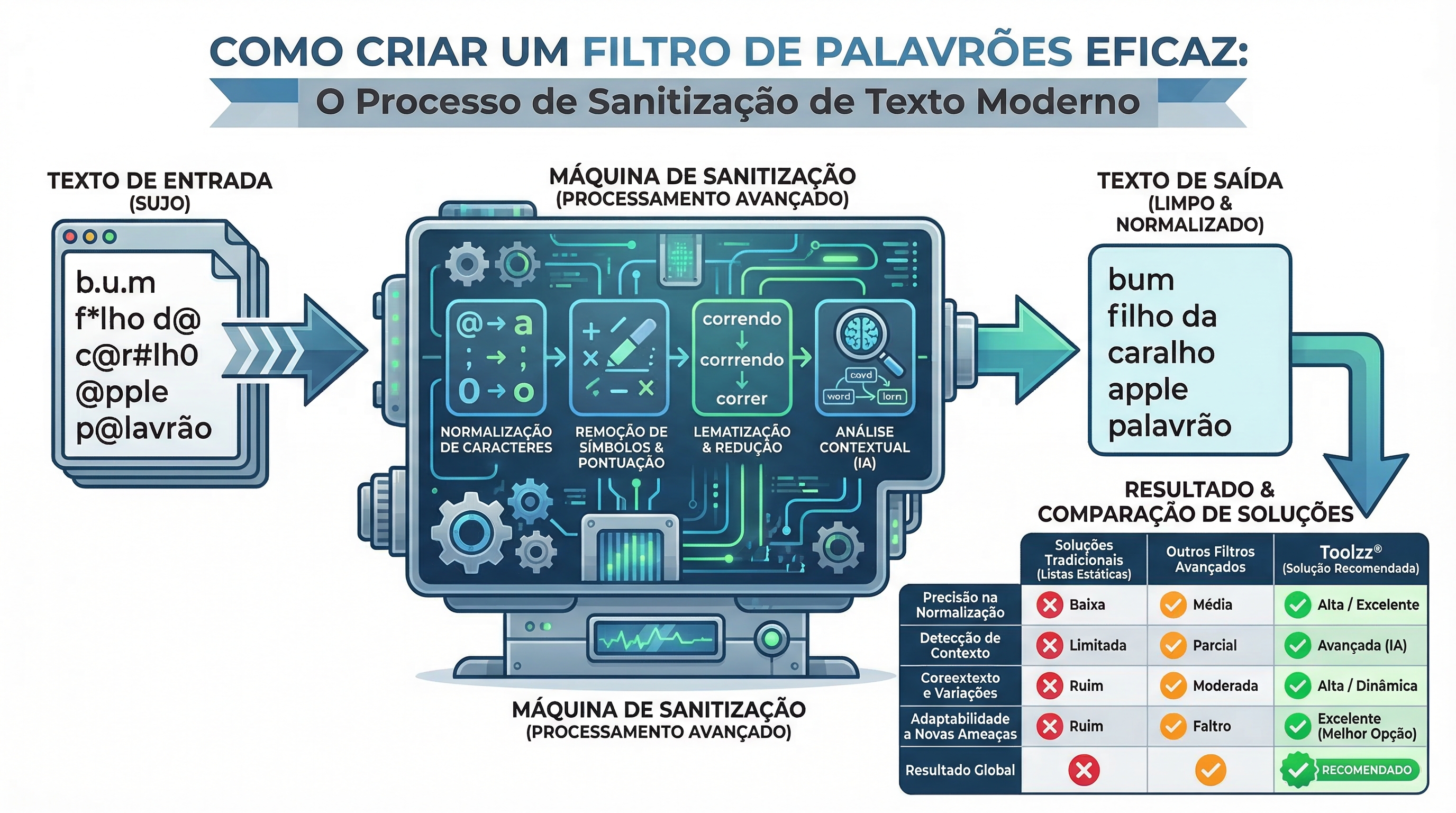

The most basic – and least effective – method is to create a list of offensive words and use a simple search to identify them. However, this approach quickly fails. Creative users find ways to evade detection by replacing letters with numbers (leet speak), inserting special characters, or using spelling variations. A robust filter needs to go beyond this superficial approach.
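As a minimal sketch of why this fails, the snippet below implements the naive approach with a one-word list. The list contents and the function name `naiveContainsProfanity` are assumptions made for illustration, not part of any real library.

```javascript
// Hypothetical banned list, using a mild example word.
const bannedWords = ['bum'];

// Naive filter: flags a message only if a banned word appears verbatim.
function naiveContainsProfanity(message) {
  const lower = message.toLowerCase();
  return bannedWords.some(word => lower.includes(word));
}

console.log(naiveContainsProfanity('you bum'));      // true
console.log(naiveContainsProfanity('you b.u.m'));    // false: punctuation hides the word
console.log(naiveContainsProfanity('a bumpy road')); // true: a harmless word is flagged
```

The second call shows how easily the list is evaded, and the third shows the opposite failure, a false positive on an innocent word.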

Sanitization: The First Line of Defense

The first step in building an effective profanity filter is sanitization. This process normalizes the input text by removing irrelevant characters and converting common variations into their standard forms. For example, "b.u.m" and "B-U-M" would both be converted to "bum", and look-alike characters such as "@" or "0" are mapped back to the letters they imitate. This drastically reduces the need for an excessively long and complex banned word list.

// Conceptual sanitization example (assumes `input` holds the raw message)
const map = { '@': 'a', '0': 'o', '1': 'i', '3': 'e', '5': 's' };
const clean = input.toLowerCase()
  .split('').map(c => map[c] || c).join('') // Map look-alikes first, so '@' becomes 'a'
  .replace(/[^a-z0-9]/g, '');               // Then remove remaining symbols

Tries: The Ideal Data Structure

After sanitization, choosing the right data structure to store and search for banned words is crucial. A Trie (also known as a prefix tree) is an excellent option. Unlike a simple list or hash map, a Trie allows efficient prefix searching, which means it can identify banned words even when they are embedded within other words.

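Checking whether a banned word starts at a given position in a Trie costs at most O(L), where L is the length of the longest banned word, regardless of how many words the list contains. Scanning a message of N characters by starting a walk at every position therefore costs at most O(N·L). A hash map supports only exact-match lookups, so finding embedded words with one means probing every substring of the message, which takes on the order of N² lookups.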
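The following is a minimal Trie sketch, assuming the text has already been sanitized to lowercase letters and digits. The class names `TrieNode` and `Trie` and the method `findAll` are illustrative choices, not an established API.

```javascript
// One node per character; children are keyed by the next character.
class TrieNode {
  constructor() {
    this.children = new Map(); // character -> TrieNode
    this.isWord = false;       // true if a banned word ends at this node
  }
}

class Trie {
  constructor(words = []) {
    this.root = new TrieNode();
    for (const word of words) this.insert(word);
  }

  // Adds one banned word, creating nodes along its path as needed.
  insert(word) {
    let node = this.root;
    for (let i = 0; i < word.length; i++) {
      const ch = word[i];
      if (!node.children.has(ch)) node.children.set(ch, new TrieNode());
      node = node.children.get(ch);
    }
    node.isWord = true;
  }

  // Returns every banned word found anywhere in text as { word, start }.
  findAll(text) {
    const matches = [];
    for (let start = 0; start < text.length; start++) {
      let node = this.root;
      for (let i = start; i < text.length; i++) {
        node = node.children.get(text[i]);
        if (!node) break; // no banned word continues with this character
        if (node.isWord) matches.push({ word: text.slice(start, i + 1), start });
      }
    }
    return matches;
  }
}

const trie = new Trie(['bum', 'bummer']);
console.log(trie.findAll('whatabummer'));
// finds 'bum' and 'bummer', both starting at index 5
```

Each walk stops as soon as no banned word can continue, so the inner loop never runs longer than the longest banned word.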

Solving the "Scunthorpe Problem"

The "Scunthorpe Problem" occurs when a banned word appears as part of a legitimate word (for example, "bum" in "bumpy"). To solve this, an additional validation step is necessary. After identifying a match in the Trie, check whether the whole word that contains the match is on an allow-list, and flag it only if it is not. This step prevents false positives and ensures that harmless words are not blocked.
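A sketch of the allow-list check might look like the following. It assumes the message is split into words before symbols are stripped, so that word boundaries survive for the lookup. The lists are hypothetical, and `containsBanned` uses a plain substring test as a stand-in for the Trie scan to keep the example short.

```javascript
// Hypothetical lists for illustration only.
const banned = ['bum'];
const allowList = new Set(['bumpy', 'bumper', 'album']);

// Stand-in for the Trie scan: true if any banned word occurs inside the token.
function containsBanned(token) {
  return banned.some(word => token.includes(word));
}

// Checks a message word by word so the allow-list can see whole words.
function findViolations(message) {
  return message
    .toLowerCase()
    .split(/\s+/)
    .map(token => token.replace(/[^a-z0-9]/g, '')) // strip punctuation per token
    .filter(token => token.length > 0)
    .filter(token => containsBanned(token) && !allowList.has(token));
}

console.log(findViolations('What a bumpy album, you bum!')); // [ 'bum' ]
```

One trade-off of splitting on whitespace is that spaced-out spellings such as "b u m" are no longer joined into one word, so a production filter would likely need to handle that case separately.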

Artificial Intelligence and Machine Learning

While Tries are excellent for identifying explicit words, they cannot detect language nuances such as sarcasm or implicit hate speech. This is where artificial intelligence (AI) and machine learning (ML) come into play. AI models can analyze the context of a sentence to determine its toxicity, even if it doesn't contain specific banned words.

However, ML comes at a cost: inference is computationally expensive and slow compared with a Trie lookup. A common approach is to use the Trie as a first-line filter and route only suspicious messages to the ML model for deeper analysis, so most messages never pay the inference latency.
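The two-tier routing can be sketched as below. Everything here is an assumption for illustration: `classifyToxicity` is a hard-coded placeholder for a real model call, `looksSuspicious` stands in for the sanitizer plus Trie scan, and the 0.5 threshold is arbitrary.

```javascript
const ML_THRESHOLD = 0.5; // assumed cut-off, tune for a real model

// Placeholder for a real toxicity model (for example, an HTTP call).
// It returns a score in [0, 1]; the rule below is arbitrary demo logic.
async function classifyToxicity(message) {
  return /\byou\b/.test(message.toLowerCase()) ? 0.9 : 0.1;
}

// Cheap first pass: stand-in for the sanitizer plus Trie lookup.
function looksSuspicious(message) {
  const text = message.toLowerCase().replace(/[^a-z0-9]/g, '');
  return text.includes('bum');
}

// Only messages that trip the cheap filter reach the expensive model.
async function moderate(message) {
  if (!looksSuspicious(message)) return 'allow'; // fast path, no model call
  const score = await classifyToxicity(message);
  return score >= ML_THRESHOLD ? 'block' : 'allow';
}

async function demo() {
  console.log(await moderate('nice weather today')); // allow
  console.log(await moderate('you b.u.m'));          // block
  console.log(await moderate('a bumpy road'));       // allow
}
demo();
```

In this arrangement the model acts as a second opinion on Trie hits, which also gives it a chance to clear false positives that the allow-list missed.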


Toolzz Bots: The Complete Solution for Content Moderation

Implementing an effective profanity filter can be complex and time-consuming. Toolzz Bots offers a complete and scalable solution for content moderation. With Toolzz Bots, you can create intelligent chatbots that use Tries, allow-lists, and, optionally, integration with AI models to detect and block inappropriate content in real time. Additionally, the platform offers analytics features to monitor the filter's effectiveness and adjust it as needed.

With Toolzz Bots, you can ensure a safe and pleasant online environment for your users without compromising performance or scalability. To get started, schedule a demo and discover how Toolzz Bots can transform content moderation in your company.

Conclusion

Building a robust profanity filter requires a layered approach that combines sanitization, efficient data structures (such as Tries), allow-lists, and, when necessary, the power of artificial intelligence. By implementing these techniques, you can protect your online community from inappropriate content and create a safer and more welcoming environment for everyone. Toolzz Bots offers a complete and easy-to-use solution for content moderation, allowing you to focus on what really matters: growing your business.