Uncontrolled AI Access: The Invisible Risk for Businesses

This post explores how uncontrolled access by AI agents to sensitive data creates a growing security challenge for businesses, and provides best practices for responsible AI deployment and governance.

March 17, 2026

With the rapid adoption of artificial intelligence, businesses face a new security challenge: uncontrolled access by AI agents to sensitive data. The proliferation of service accounts, combined with the complexity of permissions in cloud environments, creates a scenario where risk accumulates silently, often undetected by traditional security tools.

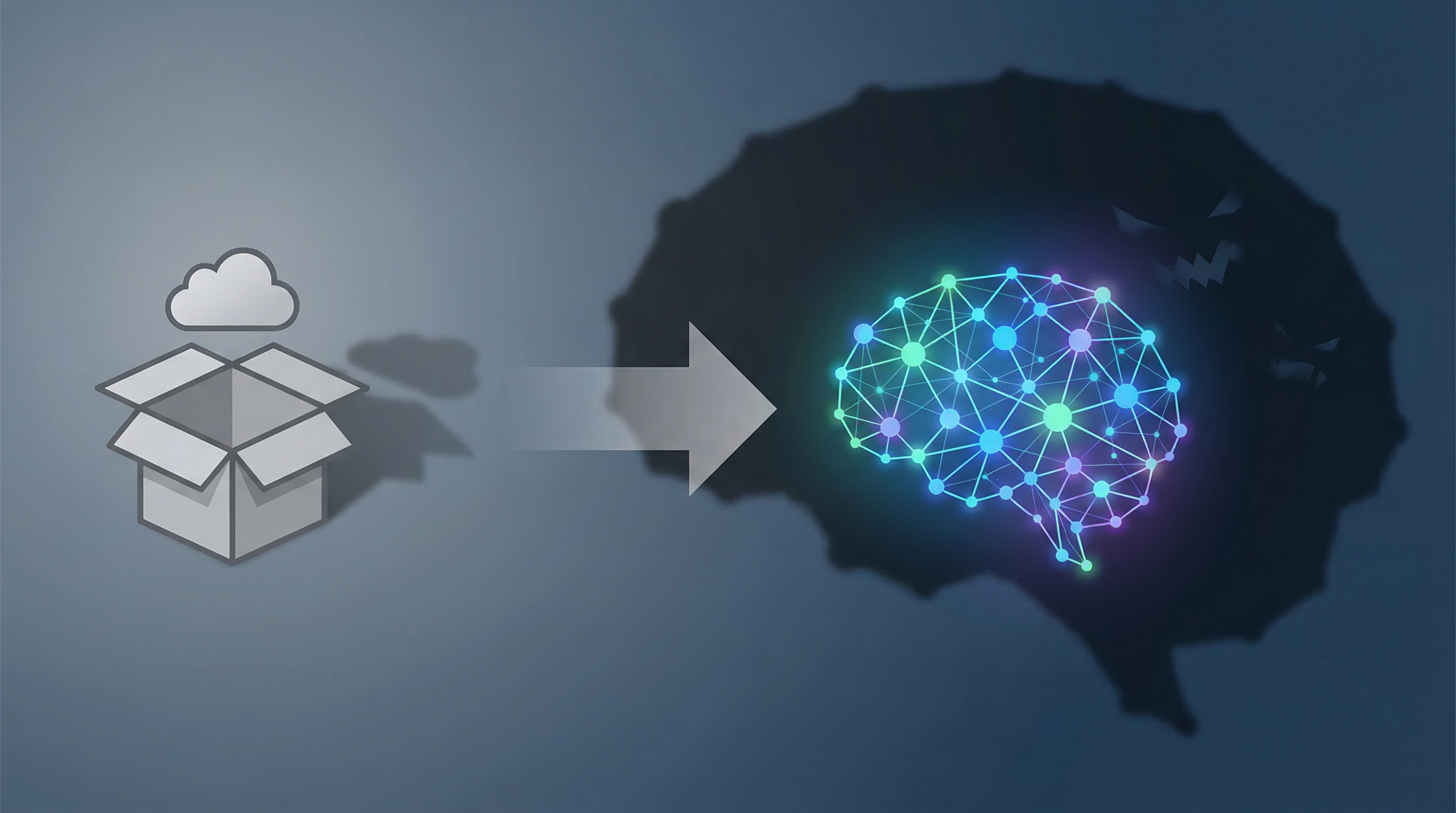

The Evolution of Shadow IT: From Dropbox to AI

The concept of Shadow IT is not new. Initially, it referred to employees using unauthorized applications and services. With the rise of AI, however, Shadow IT has evolved into something more insidious: unmanaged access and autonomous action by AI agents. The concern is no longer just unauthorized data storage, but the ability of these agents to access, process, and act on confidential information without proper oversight.

Practical Examples of Uncontrolled Access

In many organizations, it's common to see scenarios such as: a test integration of an AI coding assistant that remains active with unrestricted access to the code repository; a business analyst who automates tasks with personal credentials, creating a workflow that keeps running even after the analyst has left the team; or agent frameworks that store API keys in conversation logs. These seemingly harmless cases can open significant security gaps in the company.

The problem is that these accesses rarely appear in identity and access management (IAM) dashboards. Data loss prevention (DLP) policies may not identify AI agent activities as abnormal. The result is growing risk, often invisible to security teams.
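
One of the scenarios above, API keys ending up in conversation logs, can at least be surfaced with a simple scan. The following is a minimal sketch, not a complete secret scanner: the pattern names and the `find_secrets` and `redact` helpers are hypothetical, and the regular expressions are illustrative assumptions (only the `AKIA` prefix for AWS access key IDs is a widely documented format). A real deployment would rely on a maintained ruleset.

```python
import re

# Illustrative patterns only (assumptions, not an exhaustive ruleset).
SECRET_PATTERNS = {
    "aws_access_key_id": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    "generic_sk_token": re.compile(r"\bsk-[A-Za-z0-9]{20,}\b"),
    "bearer_token": re.compile(r"\bBearer\s+[A-Za-z0-9._\-]{20,}"),
}


def find_secrets(log_lines):
    """Return (line_number, pattern_name) pairs for lines that look like they hold a credential."""
    findings = []
    for number, line in enumerate(log_lines, start=1):
        for name, pattern in SECRET_PATTERNS.items():
            if pattern.search(line):
                findings.append((number, name))
    return findings


def redact(line):
    """Replace anything matching a known pattern with a labeled placeholder."""
    for name, pattern in SECRET_PATTERNS.items():
        line = pattern.sub(f"[REDACTED:{name}]", line)
    return line


# Hypothetical conversation log; the key below is a made-up example value.
sample_log = [
    "user: deploy the service",
    "agent: using key AKIAABCDEFGHIJKLMNOP for upload",
    "agent: done",
]
findings = find_secrets(sample_log)
cleaned = [redact(line) for line in sample_log]
```

Running a check like this before logs are persisted, rather than after, keeps the credential from being stored in the first place.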

Concerned about your AI security?

Request a Toolzz AI demonstration

The Problem of Non-Human Identities

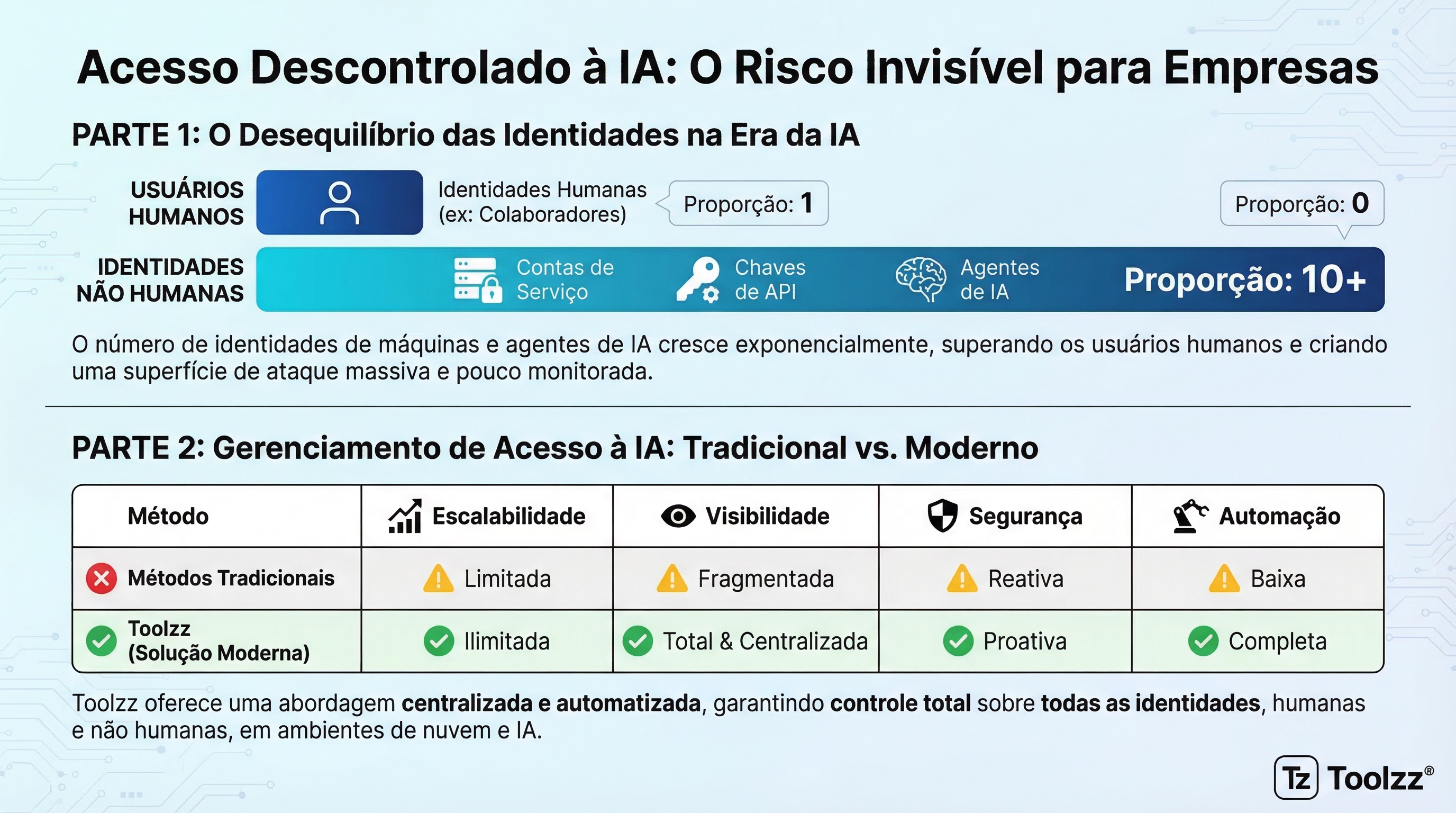

Non-human identities (service accounts, workload identities, API keys, and increasingly, AI agents) already outnumber human users in most companies, at a ratio that can reach 10:1. Managing these identities is a complex challenge, especially because they require a different governance model than the one applied to human users. Unlike human users, non-human identities typically don't sign in with interactive passwords or multi-factor authentication; they rely on keys, tokens, or certificates, so controlled access and constant monitoring matter all the more.

Access as a Control Plane for AI Trust

Access governance is fundamental to ensuring trust in AI. It's not enough to ensure that AI models are aligned with company values and that output is filtered. It's necessary to control what AI agents can actually do, limiting their access to data and systems. The approach must evolve from "is this identity authorized?" to "is this access pattern consistent with what this identity should be doing now?". To ensure this governance and control, you can learn about Toolzz AI solutions.
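
The shift from "is this identity authorized?" to "is this access pattern consistent?" can be sketched as a check that looks at context as well as scope. This is a minimal illustration under stated assumptions: `AgentProfile`, `AccessRequest`, `evaluate`, and all field names are hypothetical, and the three signals (resource, time of day, read volume) are examples rather than a complete policy model.

```python
from dataclasses import dataclass
from datetime import datetime


@dataclass(frozen=True)
class AgentProfile:
    """Hypothetical description of what an agent is expected to do."""
    agent_id: str
    allowed_resources: frozenset
    active_hours: range        # hours of the day in which the agent normally runs
    max_reads_per_hour: int


@dataclass(frozen=True)
class AccessRequest:
    """Hypothetical record of a single access attempt."""
    agent_id: str
    resource: str
    timestamp: datetime
    reads_in_last_hour: int


def evaluate(request, profile):
    """Return (allowed, reasons); reasons lists every expectation the request violates."""
    reasons = []
    if request.resource not in profile.allowed_resources:
        reasons.append("resource outside granted scope")
    if request.timestamp.hour not in profile.active_hours:
        reasons.append("outside expected operating hours")
    if request.reads_in_last_hour > profile.max_reads_per_hour:
        reasons.append("read volume above expected rate")
    return (not reasons, reasons)


profile = AgentProfile("report-bot", frozenset({"sales_db"}), range(6, 20), 100)
normal = AccessRequest("report-bot", "sales_db", datetime(2026, 3, 17, 10, 0), 5)
odd = AccessRequest("report-bot", "sales_db", datetime(2026, 3, 17, 3, 0), 500)

normal_result = evaluate(normal, profile)
odd_result = evaluate(odd, profile)
```

Note that the second request targets a resource the agent is authorized for; it is rejected only because the time and volume do not match what the agent normally does.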

Practices for Responsible AI Agent Deployment

To mitigate the risks associated with uncontrolled access by AI agents, it's essential to adopt some recommended practices:

- Permission scoping at deployment: Define the minimum access each AI agent needs to perform its task, and block deployment until that scope has been defined and approved.

- Joint identity modeling: Manage human and non-human identities in a single inventory under one consistent policy framework, adapting individual controls to how each type of identity authenticates.

- Continuous monitoring: Establish behavior baselines for each agent and detect anomalies that may indicate improper access or compromise.

- Access modeling before deployment: Assess the impact of new integrations and AI agents before putting them into production.
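
Two of the practices above, permission scoping at deployment and continuous monitoring, lend themselves to a short sketch. The code below is an assumption-laden illustration, not a product feature: `deployment_allowed` and `is_anomalous` are hypothetical helpers, permission strings such as `repo:read` are made up, and the baseline is a simple mean and standard deviation over past daily access counts, which is only one of many possible anomaly measures.

```python
import statistics


def deployment_allowed(declared_scope, requested_permissions):
    """Gate a deployment.

    Blocks when no scope was declared, or when the agent requests
    permissions beyond the declared minimum. Returns (allowed, excess).
    """
    requested = set(requested_permissions)
    if not declared_scope:
        return False, requested
    excess = requested - set(declared_scope)
    return (not excess, excess)


def is_anomalous(history, observed, threshold=3.0):
    """Flag a value more than `threshold` standard deviations from the baseline mean."""
    if len(history) < 2:
        return False  # too little data to form a baseline
    mean = statistics.mean(history)
    spread = statistics.stdev(history)
    if spread == 0:
        return observed != mean
    return abs(observed - mean) / spread > threshold


# Hypothetical example: a coding assistant scoped to read-only repository access.
gate_ok = deployment_allowed({"repo:read"}, {"repo:read"})
gate_blocked = deployment_allowed({"repo:read"}, {"repo:read", "repo:write"})

# Hypothetical daily access counts for one agent.
daily_reads = [100, 110, 95, 105, 90]
typical_day = is_anomalous(daily_reads, 104)
spike_day = is_anomalous(daily_reads, 900)
```

The threshold of three standard deviations is an arbitrary starting point; in practice it would be tuned per agent to balance missed anomalies against alert noise.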

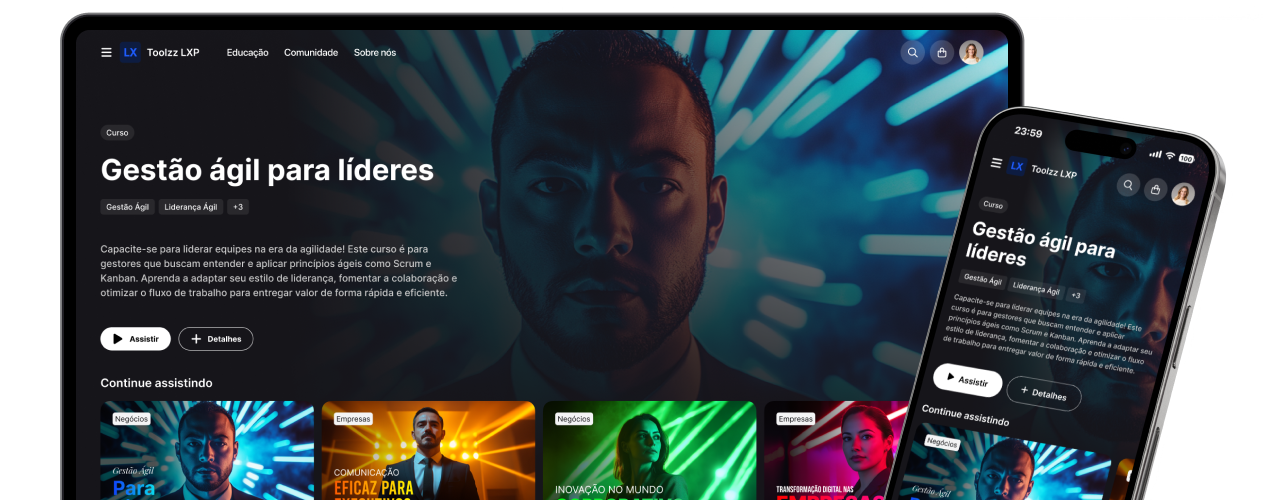

Platforms like Toolzz AI offer advanced features for identity management and access control, allowing companies to monitor and restrict access by their AI agents in real time. Additionally, Toolzz LXP can assist in raising awareness and training employees on AI security best practices.

Want to empower your team to handle AI challenges? Explore Toolzz LXP resources and promote a culture of security and continuous learning.

Conclusion

Uncontrolled access by AI agents represents a growing risk to business security. By adopting a proactive approach and implementing recommended practices, companies can significantly reduce their exposure and ensure that AI is used responsibly and securely. Ignoring this problem can lead to data breaches, financial losses, and reputational damage. AI security is not just a technical issue, but also a management responsibility.
